Learning What and Where to Draw

Scott Reed, Zeynep Akata, Santosh Mohan, Samuel Tenka, Bernt Schiele and Honglak Lee

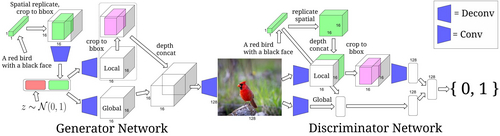

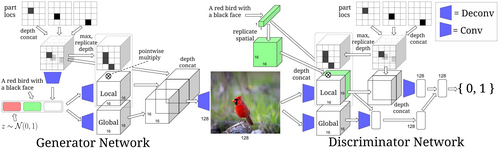

Generative Adversarial Networks (GANs) have recently demonstrated the capability to synthesize compelling real-world images, such as room interiors, album covers, manga, faces, birds, and flowers. While existing models can synthesize images based on global constraints such as a class label or caption, they do not provide control over pose or object location. We propose a new model, the Generative Adversarial What-Where Network (GAWWN), that synthesizes images given instructions describing what content to draw in which location. We show high-quality 128 × 128 image synthesis on the Caltech-UCSD Birds dataset, conditioned on both informal text descriptions and object location. Our system exposes control over both the bounding box around the bird and its constituent parts. By modeling the conditional distributions over part locations, our system also enables conditioning on arbitrary subsets of parts (e.g. only the beak and tail), yielding an efficient interface for picking part locations. We also show preliminary results on the more challenging domain of text- and location-controllable synthesis of images of human actions on the MPII Human Pose dataset.

Paper, Code and Data

- If you use our code, please cite:

@inproceedings{RAMTSL16,
  title = {Learning What and Where to Draw},
  booktitle = {Neural Information Processing Systems (NIPS)},
  year = {2016},
  author = {Scott Reed and Zeynep Akata and Santosh Mohan and Samuel Tenka and Bernt Schiele and Honglak Lee}
}
