Questioning the Status Quo in Video Streaming

The majority of today's Internet traffic is delivered via content delivery networks (CDNs). Ever-increasing end-user demands for higher bandwidth and lower latency all but ensure that the volume of content delivered through CDNs will keep growing. Typically, the CDN hauls data from origins (i.e., content providers) to its back-end servers, moves this data (over a sophisticated overlay network) to its front-end servers, and serves the data from there to the end users. If we focus on the path taken by content from origins to end users via a CDN, a simple fact becomes apparent: a significant fraction of this path traverses the CDN infrastructure, between the back-end and front-end servers. The server-to-server “landscape” formed by these paths is becoming “longer,” as front-end servers are deployed closer to end users to minimize last-mile latency. Since CDNs have been expanding their infrastructure by deploying more servers in diverse networks and geographic locations, the landscape is also becoming “wider.”

The server-to-server landscape offers a few unique perspectives, as well as opportunities for solving some long-standing networking problems in the Internet. The endpoints (or servers) in this landscape are under the control of a single organization (i.e., the CDN that owns the servers), although the paths between the endpoints might still be subject to the vagaries of the larger Internet. Hence, it is possible to design, experiment with, and validate network protocols on this landscape that would otherwise be infeasible to deploy, even for testing, in the Internet. Additionally, if CDNs control the software on end-user machines (e.g., a JavaScript-based application that fetches Web objects), we can also revisit some of the sub-optimal protocol choices for delivering content, e.g., video, to end users that have so far remained ossified.

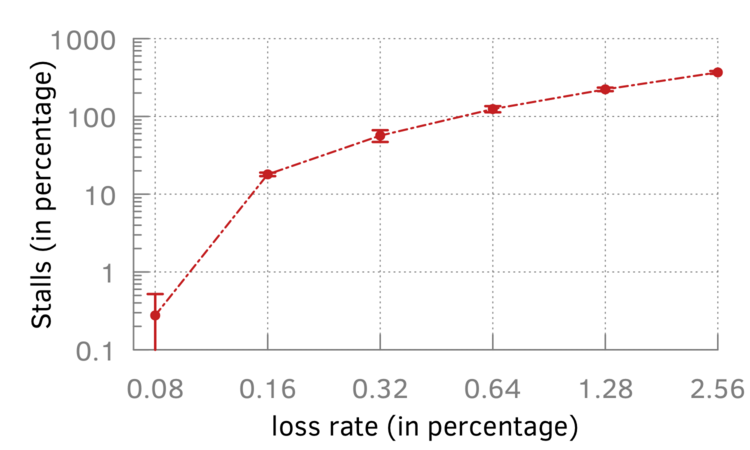

Today, TCP is the dominant transport protocol for video streaming, due to the widespread use of dynamic adaptive streaming over HTTP (DASH). The rich body of prior work on optimizing TCP, on adaptive bitrate selection algorithms, and on TCP variants, however, highlights TCP's shortcomings. Our preliminary investigation reveals that even at a loss rate of 0.16%, lower than that typically observed in the Internet, the video player spends 20% of the total video time in stalls (i.e., waiting for lost packets to arrive at the playback buffer) when streaming over TCP. But with CDNs (e.g., Akamai Technologies) and popular Web browsers (e.g., Google Chrome) already supporting QUIC (a recent transport protocol from Google currently being standardized by the IETF), it is worth revisiting this status quo in streaming.

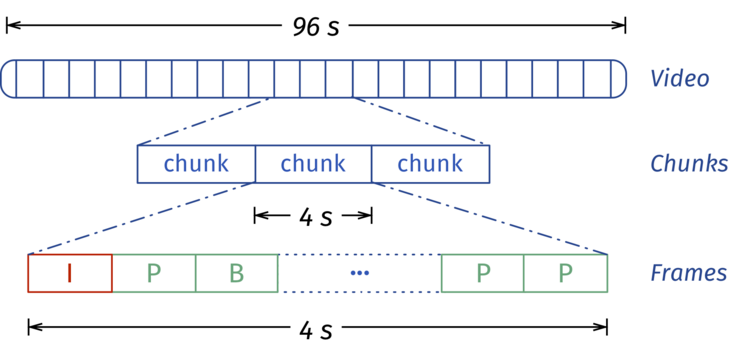

When a video is encoded using H.264 (the most widely used video codec in the Internet) and streamed via DASH, the video data is split into chunks. Each chunk contains the same number of video frames (except, perhaps, the last chunk, which might contain fewer frames), as shown in the illustration. Often the video is encoded at different qualities (i.e., at different bitrates and/or resolutions), and the details (e.g., quality levels and the names of the files associated with each level) are persisted in a manifest file. Clients (or video players) first fetch the manifest file and then download the video chunk by chunk. Prior to fetching each chunk, the adaptive bitrate (ABR) algorithm in the video player determines the quality level of that chunk based on inferred network conditions. If the stream experiences congestion along the path between the server and client, the ABR algorithm might, for instance, fetch the next chunk at a lower quality to avoid stalling (i.e., pausing) the video stream. To allow fast switching, the chunk duration is commonly in the range of two to ten seconds.
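The per-chunk decision described above can be sketched as a simple throughput-based rule. This is a minimal illustration, not the algorithm of any particular player: the `Representation` type, the bitrate ladder, the `safety` margin, and the harmonic-mean throughput estimate are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Representation:
    """One quality level listed in the manifest (hypothetical structure)."""
    name: str
    bitrate_kbps: int


def harmonic_mean(samples_kbps):
    """Estimate throughput from recent chunk downloads.

    The harmonic mean is dominated by slow samples, so a single
    fast download does not inflate the estimate.
    """
    return len(samples_kbps) / sum(1.0 / s for s in samples_kbps)


def choose_representation(ladder, throughput_kbps, safety=0.8):
    """Pick the highest bitrate that fits within a fraction of the estimate.

    Falls back to the lowest bitrate when nothing fits, so the player
    always has something to fetch.
    """
    budget = throughput_kbps * safety
    ordered = sorted(ladder, key=lambda r: r.bitrate_kbps)
    best = ordered[0]
    for rep in ordered:
        if rep.bitrate_kbps <= budget:
            best = rep
    return best


# Illustrative ladder; the values are made up for the example.
LADDER = [
    Representation("360p", 800),
    Representation("720p", 2500),
    Representation("1080p", 5000),
]

if __name__ == "__main__":
    estimate = harmonic_mean([4200, 3900, 4000])
    print(choose_representation(LADDER, estimate).name)
```

A real player would typically also consider the playback buffer level, but the rule above captures the basic trade-off: when the throughput estimate drops, the next chunk is requested at a lower quality rather than risking a stall.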

A simple observation shows why fully reliable transports are ill-suited for video streaming: video data consists of different types of frames, and some of these types do not require reliable delivery. Losing such frames has minimal or no impact on the end-user quality of experience (QoE), since the losses can be recovered from. Therefore, by adding support for unreliable streams in QUIC and offering a selectively reliable transport, wherein not all video frames are delivered reliably, we can optimize video streaming and improve the end-user experience. This approach has two advantages: (a) it builds atop QUIC, which is rapidly gaining adoption; and (b) it involves only a simple, backward-compatible, incrementally deployable extension, namely support for unreliable streams in QUIC.
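To make the idea of selective reliability concrete, the sketch below splits the frames of a chunk between a reliable and an unreliable stream. It does not use any real QUIC API. The policy shown (I- and P-frames reliable, B-frames unreliable) is only an assumption for illustration; the text above does not specify which frame types are sent unreliably, and `plan_delivery` and `frames_from_pattern` are hypothetical helpers.

```python
from enum import Enum


class FrameType(Enum):
    """H.264 frame types: intra-coded, predicted, bi-directionally predicted."""
    I = "I"
    P = "P"
    B = "B"


# Assumed policy for illustration only: frames that other frames commonly
# depend on are retransmitted on loss; the rest are sent best-effort.
RELIABLE_TYPES = frozenset({FrameType.I, FrameType.P})


def frames_from_pattern(pattern):
    """Turn a string such as "IBBPBB" into a list of FrameType values."""
    return [FrameType(ch) for ch in pattern]


def plan_delivery(frames, reliable_types=RELIABLE_TYPES):
    """Assign each frame index to the reliable or the unreliable stream."""
    plan = {"reliable": [], "unreliable": []}
    for index, frame_type in enumerate(frames):
        stream = "reliable" if frame_type in reliable_types else "unreliable"
        plan[stream].append(index)
    return plan


if __name__ == "__main__":
    chunk = frames_from_pattern("IBBPBBPBB")
    print(plan_delivery(chunk))
```

Because the policy is just a set of frame types, it can be tightened (for example, only I-frames reliable) or relaxed without touching the rest of the logic, which is convenient when studying how the split interacts with the ABR algorithm.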

Our current focus is a thorough examination of the use of unreliable streams for video streaming and of the interplay between such a partially reliable transport and application-layer ABR schemes. To this end, we are collaborating with researchers from the University of Massachusetts Amherst and Akamai Technologies to design a practical, scalable solution for video streaming.