What makes for effective detection proposals?

J. Hosang, R. Benenson, P. Dollár, B. Schiele

Papers

Please cite our paper when you use our data. :)


J. Hosang, R. Benenson, P. Dollár, and B. Schiele.

What makes for effective detection proposals?

PAMI 2015.

arXiv

@ARTICLE{Hosang2015Pami, author = {J. Hosang and R. Benenson and P. Doll\'ar and B. Schiele}, title = {What makes for effective detection proposals?}, journal = {PAMI}, year = {2015} }

J. Hosang, R. Benenson, and B. Schiele.

How good are detection proposals, really?

BMVC 2014.

PDF, arXiv

@INPROCEEDINGS{Hosang2014Bmvc, author = {J. Hosang and R. Benenson and B. Schiele}, title = {How good are detection proposals, really?}, booktitle = {BMVC}, year = {2014} }

Data & Code

If you're interested in benchmarking your detection proposal method, or in the code or data, please contact me: Jan Hosang

I'm in the process of preparing data and code for release. You can find everything that is available so far below. Please let me know if you're missing something!

- The code is available on GitHub.

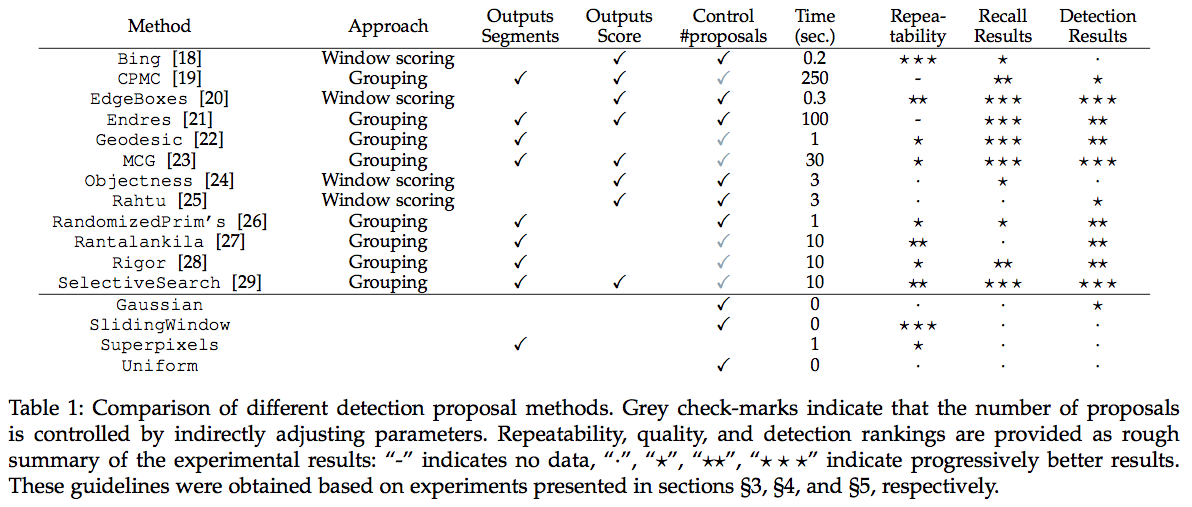

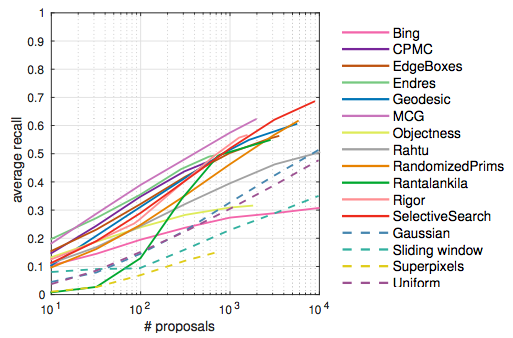

- For the recall experiments (see Section 4 in the paper), we ran twelve detection proposal methods and four baseline methods on the PASCAL test set.

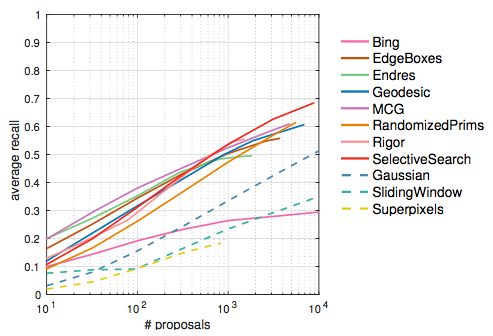
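As a rough illustration of what a recall experiment measures, here is a minimal Python sketch: for each ground-truth box it takes the best intersection over union (IoU) reached by any proposal, then reports the fraction of boxes whose best IoU clears a threshold. It assumes boxes given as `(x1, y1, x2, y2)` in continuous coordinates; the function names are hypothetical and this is not the released evaluation code. In a dataset-level experiment the per-image overlap lists would be concatenated before computing recall.

```python
from typing import List, Sequence, Tuple

# Assumed box format: (x1, y1, x2, y2) with x2 > x1 and y2 > y1.
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def best_overlaps(gt: Sequence[Box], proposals: Sequence[Box]) -> List[float]:
    """For each ground-truth box, the highest IoU achieved by any proposal."""
    return [max((iou(g, p) for p in proposals), default=0.0) for g in gt]


def recall_at(overlaps: Sequence[float], threshold: float) -> float:
    """Fraction of ground-truth boxes matched by some proposal with IoU >= threshold."""
    if not overlaps:
        return 0.0
    return sum(o >= threshold for o in overlaps) / len(overlaps)


if __name__ == "__main__":
    gt = [(0, 0, 10, 10), (20, 20, 30, 30)]
    proposals = [(0, 0, 10, 8), (50, 50, 60, 60)]
    overs = best_overlaps(gt, proposals)
    # Sweeping the threshold traces out a recall-versus-IoU curve.
    for t in (0.5, 0.7, 0.9):
        print(t, recall_at(overs, t))
```

In this toy example the first ground-truth box is covered at IoU 0.8 and the second is missed, so recall is 0.5 at thresholds 0.5 and 0.7 and drops to 0.0 at 0.9.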

- We also ran recall experiments on ImageNet (see Section 4 in the paper).

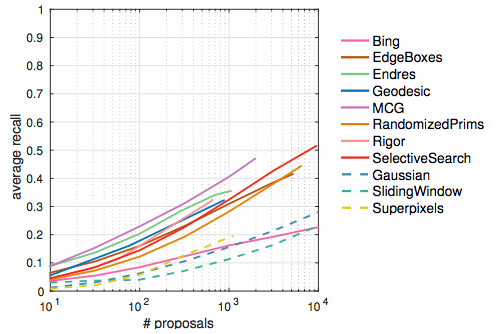

- Data from the recall experiments on COCO (see Section 4 in the paper).

- For the repeatability experiments (see Section 3 in the paper), we applied a number of perturbations to the PASCAL test set.

- Here, you can download the transformed PASCAL images (70 GB, md5sum: 5a3095a3ed893e098717fa9c88e19929).

- You can download the results of matching proposals on the transformed PASCAL images, which are needed to reproduce the plots (133 MB, md5sum: 28a1722caf9e37b011d8b52ea24a7220).
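The md5sums above can be checked with the standard `md5sum` tool. For platforms without it, the following is a minimal Python sketch that streams the file in 1 MiB chunks, so even the 70 GB archive is never held in memory. The script name `verify_md5.py` and the function names are hypothetical.

```python
import hashlib
import sys


def md5sum(path: str, chunk_size: int = 1 << 20) -> str:
    """Hex MD5 digest of a file, read in chunks to keep memory use constant."""
    digest = hashlib.md5()
    with open(path, "rb") as f:
        # iter() with a sentinel keeps calling f.read until it returns b"".
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify(path: str, expected: str) -> bool:
    """True if the file's MD5 matches the published checksum (case-insensitive)."""
    return md5sum(path) == expected.strip().lower()


if __name__ == "__main__" and len(sys.argv) == 3:
    # Usage: python verify_md5.py <downloaded-file> <expected-md5>
    ok = verify(sys.argv[1], sys.argv[2])
    print("OK" if ok else "MISMATCH")
    sys.exit(0 if ok else 1)
```

For example, `python verify_md5.py <downloaded-archive> 5a3095a3ed893e098717fa9c88e19929` should print `OK` for an intact download of the transformed images. The file name placeholder stands in for whatever name the archive is saved under.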

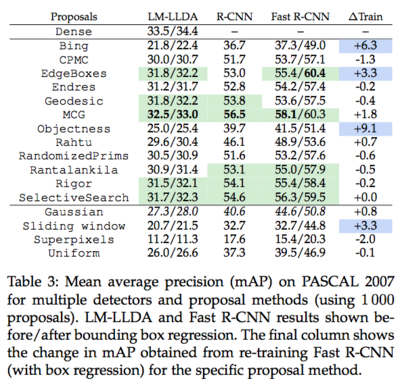

Detection performance with LM-LLDA and R-CNN on PASCAL VOC 2007