KnowFi: Knowledge Extraction from Long Fictional Texts

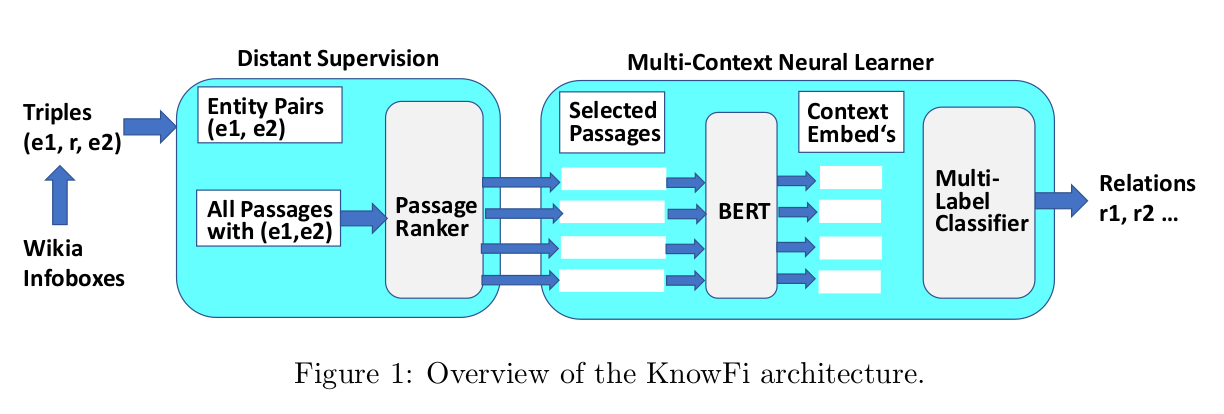

Knowledge base construction has recently been extended to fictional domains like multi-volume novels and TV/movie series, aiming to support explorative queries for fans and sub-culture studies by humanities researchers. This task involves the extraction of relations between entities. State-of-the-art methods are geared for short input texts and basic relations, but fictional domains require tapping very long texts and need to cope with non-standard relations where distant supervision becomes sparse. This work addresses these challenges by a novel method, called KnowFi, that combines BERT-enhanced neural learning with judicious selection and aggregation of text passages. Experiments with several fictional domains demonstrate the gains that KnowFi achieves over the best prior methods for neural relation extraction.

Publication(s)

KnowFi: Knowledge Extraction from Long Fictional Texts

Cuong Xuan Chu, Simon Razniewski, Gerhard Weikum

In Proc. AKBC 2021