TACoS Multi-Level Corpus

Abstract

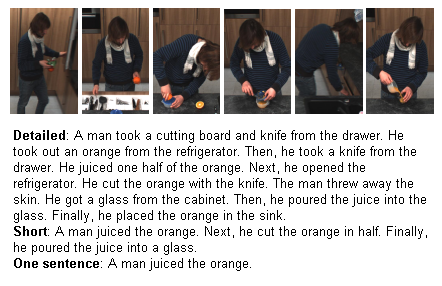

Existing approaches for automatic video description focus on generating single sentences at a single level of detail. We address both of these limitations: for a variable level of detail we produce coherent multi-sentence descriptions of complex videos featuring cooking activities. To understand the difference between detailed and short descriptions, we collect and analyze a video description corpus with three levels of detail.

Corpus

This site hosts the TACoS Multi-Level corpus presented in Coherent Multi-Sentence Video Description with Variable Level of Detail [1].

Please contact us if you have questions

Download

License

The data is only to be used for scientific purposes and must not be republished other than by the Max Planck Institute for Informatics. The scientific use includes processing the data and showing it in publications and presentations. When using it please cite [1].

TACoS Multi-Level

- Corpus (9.3 MB)

Video data

Help & Contact

If you need any help or have any suggestions feel free to contact Anna Rohrbach and Marcus Rohrbach.

We are looking forward to hear from your success of using the dataset.

Feel free to subsribe to our mailing list to get updates (cookingactivities-join@lists.mpi-inf.mpg.de).

Related datasets of our group

- Saarbrücken Corpus of Textually Annotated Cooking Scenes (short: TACoS) textual descriptions for MPII Cooking Composite Activities on a single level. An earlier work, with only a single level of descriptions, but additional sentence similarity annotations.

- MPII Multi-Kinect Dataset: Multi-view kinect object classification, recorded in the same kitchen.

Change log

- 15/01/18: release of version 1.0

References

[1] Coherent Multi-Sentence Video Description with Variable Level of Detail, A. Rohrbach, M. Rohrbach, W. Qiu, A. Friedrich, M. Pinkal and B. Schiele, GCPR, (2014)

[2] Grounding Action Descriptions in Videos, M. Regneri, M. Rohrbach, D. Wetzel, S. Thater, B. Schiele and M. Pinkal, Transactions of the Association for Computational Linguistics (TACL), Volume 1, p.25-36, (2013)

[3] Translating Video Content to Natural Language Descriptions, M. Rohrbach, W. Qiu, I. Titov, S. Thater, M. Pinkal and B. Schiele, IEEE International Conference on Computer Vision (ICCV), December, (2013)