Xplore-M-Ego: Contextual Media Retrieval Using Natural Language Queries

Sreyasi Nag Chowdhury, Mateusz Malinowski, Andreas Bulling, and Mario Fritz

Abstract

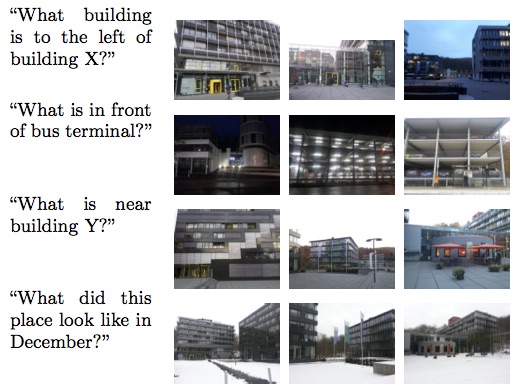

The widespread integration of cameras in hand-held and head-worn devices as well as the ability to share content online enables a large and diverse visual capture of the world that millions of users build up collectively every day. We envision these images as well as associated meta information, such as GPS coordinates and timestamps, to form a collective visual memory that can be queried while automatically taking the ever-changing context of mobile users into account. As a first step towards this vision, in this work we present Xplore-M-Ego: a novel media retrieval system that allows users to query a database of contextualised images using spatio-temporal natural language queries. We evaluate our system using a new dataset of synthesised and real image queries as well as through a usability study. One key finding is that there is a considerable amount of inter-user variability, for example in the resolution of spatial relations in natural language utterances.
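To make the idea of querying contextualised images more concrete, the sketch below shows the kind of structured spatio-temporal filter that a natural language query could eventually be resolved to. It is a minimal illustration and not the system described in the paper: the `MediaItem` record, the `haversine_m` and `retrieve` helpers, and the sample coordinates are all hypothetical, and the natural language parsing step itself is left out.

```python
from dataclasses import dataclass
from datetime import datetime
from math import asin, cos, radians, sin, sqrt


@dataclass(frozen=True)
class MediaItem:
    """Hypothetical record: an image plus its GPS position and timestamp."""
    name: str
    lat: float
    lon: float
    taken_at: datetime


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two (lat, lon) points."""
    earth_radius_m = 6371000.0
    dlat = radians(lat2 - lat1)
    dlon = radians(lon2 - lon1)
    a = sin(dlat / 2) ** 2 + cos(radians(lat1)) * cos(radians(lat2)) * sin(dlon / 2) ** 2
    return 2 * earth_radius_m * asin(sqrt(a))


def retrieve(items, user_lat, user_lon, radius_m, start, end):
    """Return items taken in [start, end) within radius_m of the user, nearest first.

    The user's position stands in for the "context" of a mobile user; a query
    such as "images taken near me in December 2014" (an assumed example) would
    map to one call of this function.
    """
    hits = []
    for item in items:
        if not (start <= item.taken_at < end):
            continue  # temporal constraint not satisfied
        distance = haversine_m(user_lat, user_lon, item.lat, item.lon)
        if distance <= radius_m:
            hits.append((distance, item))
    hits.sort(key=lambda pair: pair[0])  # sort by distance only
    return [item for _, item in hits]


# Assumed sample data, for illustration only.
memory = [
    MediaItem("a.jpg", 49.2580, 7.0462, datetime(2014, 12, 5, 10, 0)),
    MediaItem("b.jpg", 49.2600, 7.0460, datetime(2014, 12, 20, 16, 30)),
    MediaItem("c.jpg", 49.2579, 7.0460, datetime(2014, 6, 1, 9, 0)),
]

nearby = retrieve(memory, 49.2578, 7.0460, 100.0,
                  datetime(2014, 12, 1), datetime(2015, 1, 1))
print([item.name for item in nearby])
```

The fixed `radius_m` is exactly the kind of parameter that the reported inter-user variability makes hard to choose: different users may mean different distances by a spatial relation such as "near".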

References

[1] Xplore-M-Ego: Contextual Media Retrieval Using Natural Language Queries. S. N. Chowdhury, M. Malinowski, A. Bulling, and M. Fritz. ICMR'16 (Short Paper).

[2] Xplore-M-Ego: Contextual Media Retrieval Using Natural Language Queries. S. N. Chowdhury, M. Malinowski, A. Bulling, and M. Fritz. Technical Report.

[3] Contextual Media Retrieval Using Natural Language Queries. S. N. Chowdhury. Master's Thesis. 2015.

Dataset

Source Code

The following example source files are compatible with the DCS Parser (Percy Liang et al.) and are required to reproduce the results from the paper.

- Collective Visual Memories

- Schema

- Media Predicates

- Lexicon